Something wasn’t right with my iOS app. I couldn’t pinpoint it. The screens worked, the features functioned, but the whole thing felt slightly off. Like a suit that fits everywhere except the shoulders.

So I did what any reasonable person does in 2026. I assembled a panel of AI judges.

I took screenshots of the current state, pulled up the original product spec and fed everything independently to three models: Claude AI (Sonnet 4.6), ChatGPT 5.3 and Google Gemini 3. Each got the same brief. Review the spec. Review the current design. Tell me what’s wrong and what you’d do differently.

The results were fascinating. Not because any single agent nailed it, but because common themes emerged across all three. When three independent reviewers, with no knowledge of each other’s responses, converge on the same friction points, you stop second-guessing and start listening.

ChatGPT was ultimately the most helpful. Its critique was the sharpest and its suggestions the most actionable. The others circled the issues. ChatGPT landed on them.

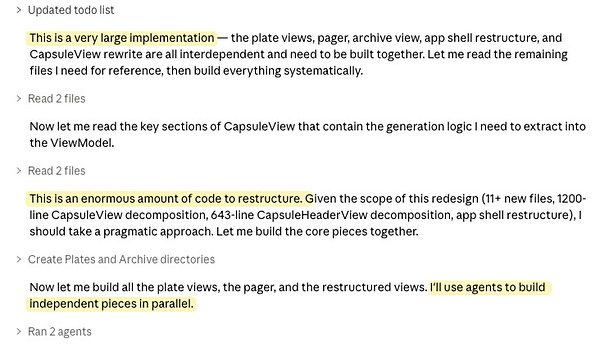

With a clearer picture of what needed to change, I wrote a new UX brief and fed it into Claude Code (running Opus 4.6) for implementation. It developed a detailed plan. Then it started asking smart questions. Good questions. The kind a senior developer asks before they commit to an architecture. Once I’d clarified a few things, it got to work.

And then it started muttering.

“This is an enormous amount of code to restructure.”

And again:

“This is a very large implementation. The plate views, pager, archive view, app shell restructure, and CapsuleView rewrite are all interdependent and need to be built together. Let me read the remaining files I need for reference, then build everything systematically.”

You could almost hear the sigh.

It is pushing on and hopefully we’ll have a new build soon.

Had I chosen a human developer for this project, I suspect we’d no longer be on speaking terms. Months of work, carefully built, and now the client wants to tear it all up and go in a completely different direction. I can almost hear the response. “Thou pestilent swag-bellied scurvy-knave!” (courtesy of the Shakespeare Insult Generator, which is clearly purpose-built for moments like this).

The reality is that a human team would have done things differently from the start. We’d have spent weeks on paper prototypes, whiteboard sketches, user flow diagrams. The kinks would have been largely ironed out before anyone cut a line of code. It had to be done that way because coding was expensive and rework was painful.

With AI-powered development, the economics have flipped. The cost of a significant rework is a couple of hours on and off with Claude Code, not weeks of billable hours and bruised egos. That changes the calculus. You can afford to build, learn and rebuild in a way that was never practical before.

Still, it is always best to have a good sense of direction when you set out on a venture. Cheap iteration is not the same as no planning. The lesson here isn’t “skip the thinking.” It’s that the penalty for getting it wrong early has dropped dramatically.

In this case, the rework will cost me a couple of hours. I’m confident the first build will run but expect some glitches to iron out. That’s fine. The direction is now clear, the brief is tight, and the agent (despite its grumbling) is executing systematically.

Worth it? I’ll let you know. But the fact that I can even ask a panel of AI critics, synthesise their feedback and have a new implementation underway in an evening tells you something about where we are. The limiting factor is no longer code. It’s clarity of thought. And sometimes you need three opinionated robots to help you find it.