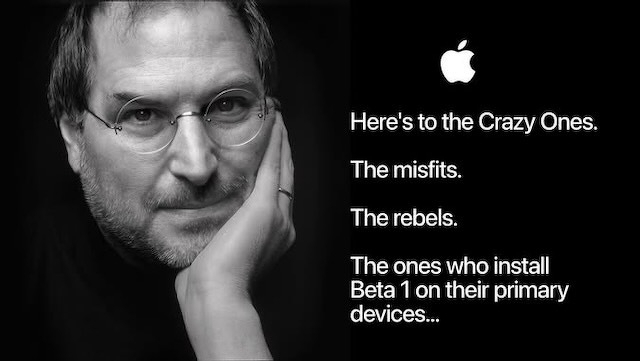

Life is too short for beta software

Tempted as I am, I will not, must not, dabble with beta software.

Perhaps I need this etched on my mouse to remind me?

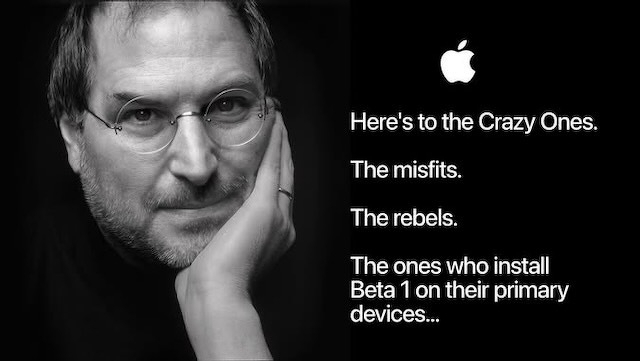

At WWDC 2026 this week, someone asked Siri to go through a folder of contractor quotes as PDFs, compare them, pick the best option and draft a reply email. Siri did it. Live, on stage, in front of an audience.

That’s not a kitchen timer. That’s not “Hey Siri, what’s the weather.” That’s the kind of task you’d currently hand to Claude or ChatGPT with careful prompting and a bit of luck. Apple just demonstrated it happening through a voice assistant most of us had written off.

It is worth understanding how they got there - because the architecture behind it is genuinely interesting, and a lot of it comes down to a clever solution to a very unglamorous problem: memory.

Apple didn’t announce anything revolutionary at WWDC. For a company of this maturity, that’s not a criticism - it’s a read of the room.

The focus was platform optimisation and extension: making what already exists faster, smarter and less visually exhausting. Yes, they’ve walked back some of the Liquid Glass overload. Good.

S&P 500 rejects SpaceX, also blocking entry for OpenAI and Anthropic - Ars Technica

Twelve months ago the most capable model you could rent was Claude Opus 4, at $15 in and $75 out per million tokens. Today the flagship Opus costs a third of that: $5 in, $25 out. So the price of frontier AI collapsed over the year, right?

Not even slightly. It went up. You just have to know where to look, and who is paying.

Microsoft’s annual developer conference, Build, kicked off at 3am Melbourne time on Wednesday. I didn’t stay up to watch - but I’ve absorbed the media releases and technical docs, and there’s a genuine shift happening here that’s worth unpacking.

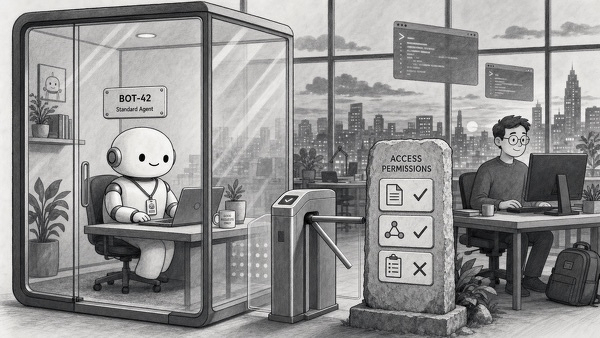

TL;DR for the non-technical: AI assistants are about to get much more capable, but that creates a trust problem - how do you let a smart assistant do things on your computer without giving it the keys to everything? Microsoft just announced that Windows itself will act as the security guard. It will control exactly what an AI assistant can see and touch on your machine, track what it does separately from what you do, and run smaller AI models directly on your computer so your data doesn’t have to leave your desk. Think of it as giving your AI assistant its own office with its own keycard, instead of letting it wander freely through yours. The catch: it needs newer, more powerful hardware to work properly, and most of it isn’t shipping yet.

Now, the details.

The Tifosi are furious, and they are aiming at the wrong target. The Ferrari Luce, Maranello’s first production electric car, was unveiled near Rome on 25 May to a wave of revulsion. “A Nissan Leaf with a prancing horse,” said the internet. Nissan, delighted, leaned in and thanked Ferrari for the compliment. The market was less amused. Ferrari shares fell more than eight per cent in Milan and over five per cent in New York the next morning. For a marque that has sold beauty for almost eighty years, that is a remarkable thing to do with a single reveal.

But the styling is not the story. The styling is a symptom.

A Chinese carmaker just out-engineered the people who invented the prestige limousine.

The Nio ET9 is the proof. Watch what its suspension does to a broken road, then watch a BMW or an Audi attempt the same. One glides. The others crash about like farm equipment.

Nio pitches the ET9 as an executive flagship and the cabin earns it: four seats, reclining rear thrones, a fridge and screens everywhere. Nio reckons the ride is “comparable to cruising in a business jet”. On this evidence that is not the usual launch-day hyperbole.

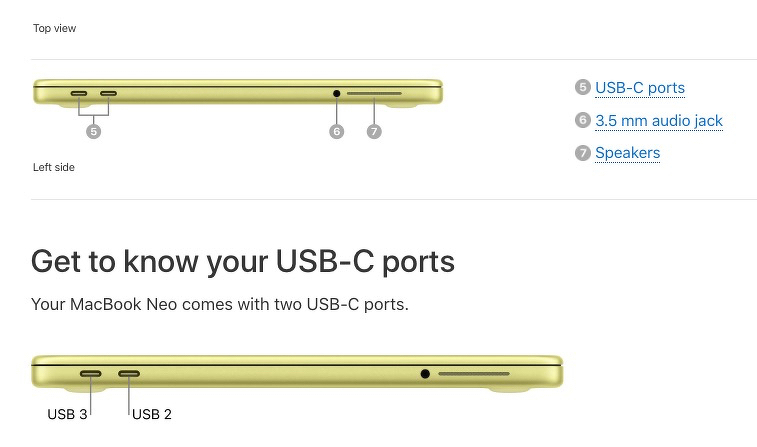

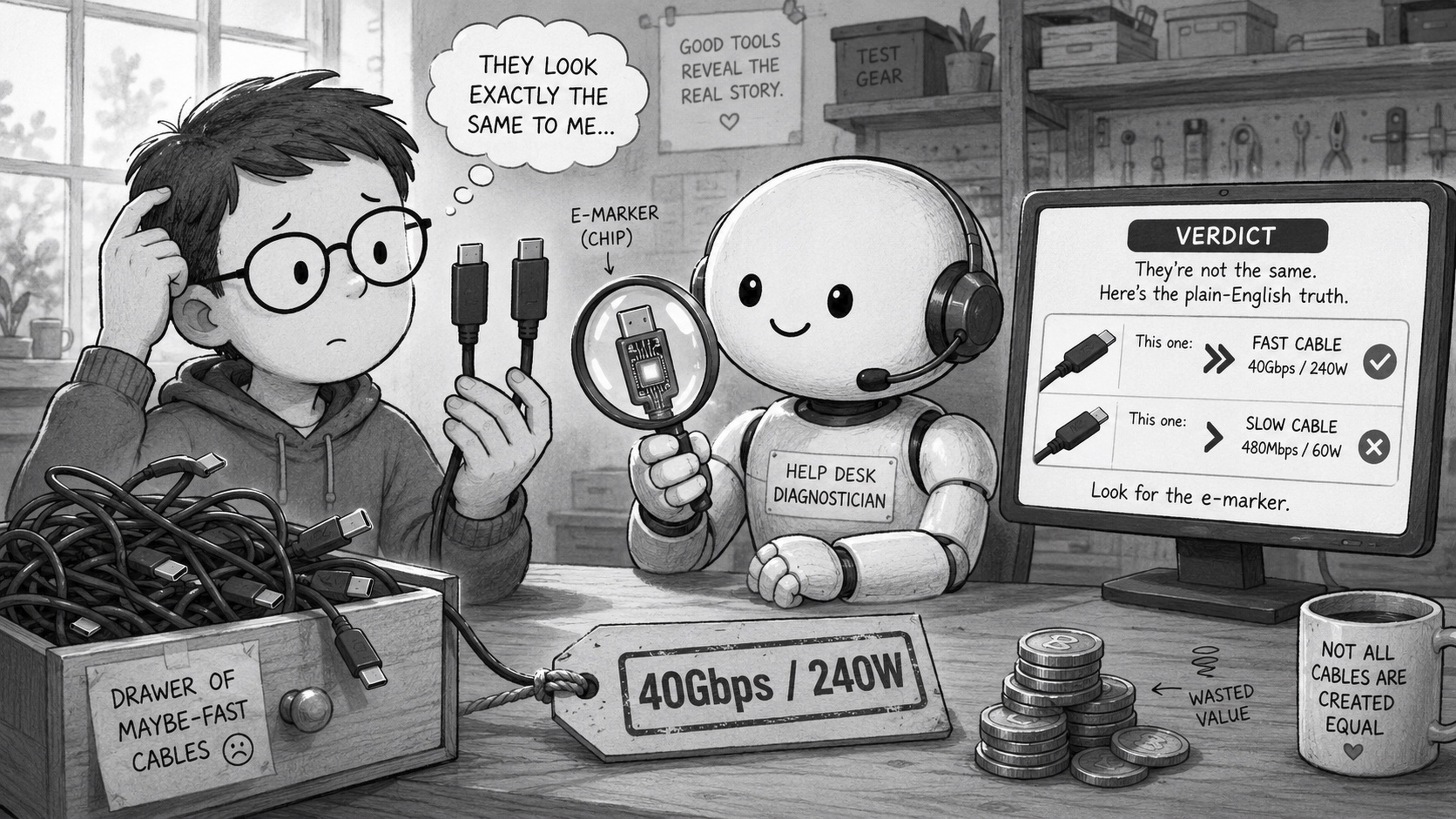

Last time it was the cables lying to you. You binned the mystery leads, bought the certified ones with the speed and watts printed on the side, labelled the survivors. Good. You fixed the drawer.

Now look at the laptop itself, because it is about to play the same trick on you. Two ports, same oval socket, same confident silver moulding. One is fast. One is not. And nothing on the outside tells you which is which.

Open the drawer where you keep your cables. Go on. Somewhere in that tangle are two USB-C cables that look identical, came in similar boxes and feel the same in your hand. One will charge your laptop at full speed and drive a 4K monitor. The other can barely run a mouse.

The connector tells you nothing. That is the whole problem.