This is the technical companion to my earlier post about building Felix, my personal bookmark manager. That post covered the why and what. This one covers the how.I’m not a developer, so these choices came from conversations with Claude Code about what would work best for a local-first personal tool.

The stack

Backend: Flask 3.0, SQLAlchemy, SQLite. Flask over FastAPI because it’s simpler for a single-user app. SQLite over PostgreSQL because there’s no need for a separate database server — everything lives in one file I can back up.

AI: Anthropic Claude API using the Haiku model. Fast enough for batch processing, cheap enough that 750 bookmarks costs cents.

Content extraction: Trafilatura for web scraping. It handles the messy reality of pulling article text from arbitrary URLs.

Background jobs: APScheduler for hourly summarisation and weekly exports. The app keeps working while I sleep.

Frontend: HTMX + Alpine.js. No React or Vue complexity. The interface is minimal — inspired by macOS Finder — and doesn’t need a JavaScript framework.

Implementation phases

Claude estimated 15-20 hours. We finished in just over 6.

Phase 1: Core Infrastructure (estimated 3-4 hours)

Flask web framework setup. SQLite database with SQLAlchemy ORM. Basic bookmark CRUD operations. Minimalist UI.

Phase 2: Multi-Source Import (estimated 4-5 hours)

Feedbin API integration with OAuth-free authentication. Safari Reading List extraction. Browser bookmarklet for one-click saving. Server-Sent Events for real-time import progress.

Phase 3: AI Integration (estimated 3-4 hours)

Claude API integration. Content extraction with Trafilatura. Batch summarisation with retry logic. Keyword extraction from content.

Phase 4: Search & Organisation (estimated 2-3 hours)

Full-text search across all fields. Filtering by source, date range, summarisation status. Clickable keywords for discovery. Statistics dashboard showing trends and top domains.

Phase 5: Export Automation (estimated 3-4 hours)

Markdown export formatted for Obsidian with hashtags. JSON, TSV, and HTML export formats. Scheduled weekly exports to Obsidian vault.

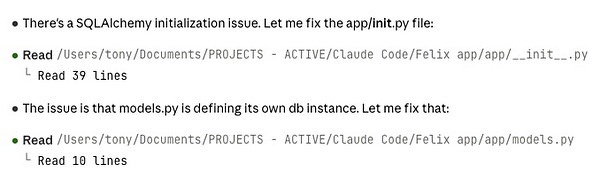

(Claude Code in action - finding issues and fixing them on the run.)

A design decision worth noting

Bookmarks use their publication date not import date. This matters for chronological accuracy. An article published six months ago shouldn’t appear as “new” just because I starred it today.

Daily workflow

Add bookmarks by

- Put in ‘Reading List’ in Safari on Mac or iOS - synced by iCloud

- Star as favourite in NetNewsWire on Mac or iOS or in Feedbin

- Add in browser via bookmarklet (Chrome or Safari)

- Manually enter into Felix

Morning routine: Open Felix at localhost:5001. The background scheduler has already imported overnight Feedbin stars.

Browse new bookmarks: See the list with 350-450 character summaries already generated.

Search and filter: Use keyword search to find articles about “Python async” or filter by domain.

Add notes: Click a bookmark to open the detail panel, add my own thoughts.

Weekly export: Every Monday, previous week’s bookmarks automatically export to my Obsidian vault as individual markdown files.

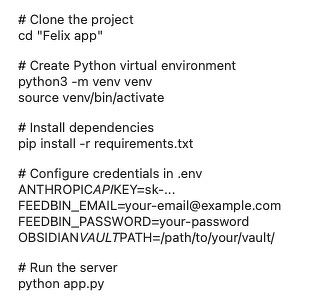

Running it yourself

This is tightly coupled to my setup. You’d need:

Then visit http://localhost:5001 in your browser.

What would make it shareable

To turn Felix into something others could use, I’d need to package it as a native Mac app (Electron or PyInstaller), add OAuth flows for Feedbin instead of password storage, support multiple users with account management, deploy a hosted version, add onboarding for non-technical users, and support more sources like Raindrop.io, Instapaper and browser sync.

For now it’s a personal tool that fits my workflow exactly. The value comes from my curated feed list. Someone else’s feeds would produce different results.

Performance notes

750 bookmarks imported. About 90% AI success rate. Processing speed 30-60 seconds per batch of 10 bookmarks. API cost roughly $0.50 per month. Database size 2MB. Search is instant at this scale and should handle 10,000+ bookmarks.

Confession: The 6 hour build was a bit obsessive on my part. Frantic co-coding from 4:40PM - 5:57PM had me trip Claude’s rate limit. “You’ve hit the limit. Come back at 9PM for more.” I couldn’t wait so coughed up for Claude Max plan (A$169.99/mth). This could prove to be yet another expensive hobby.

See Part 1 for the non-technical version: why I built Felix and what I learned from the process.

A nod to the history of Felix the Cat from The National Film and Sound Archive of Australia